Sit healthier in 3 steps:

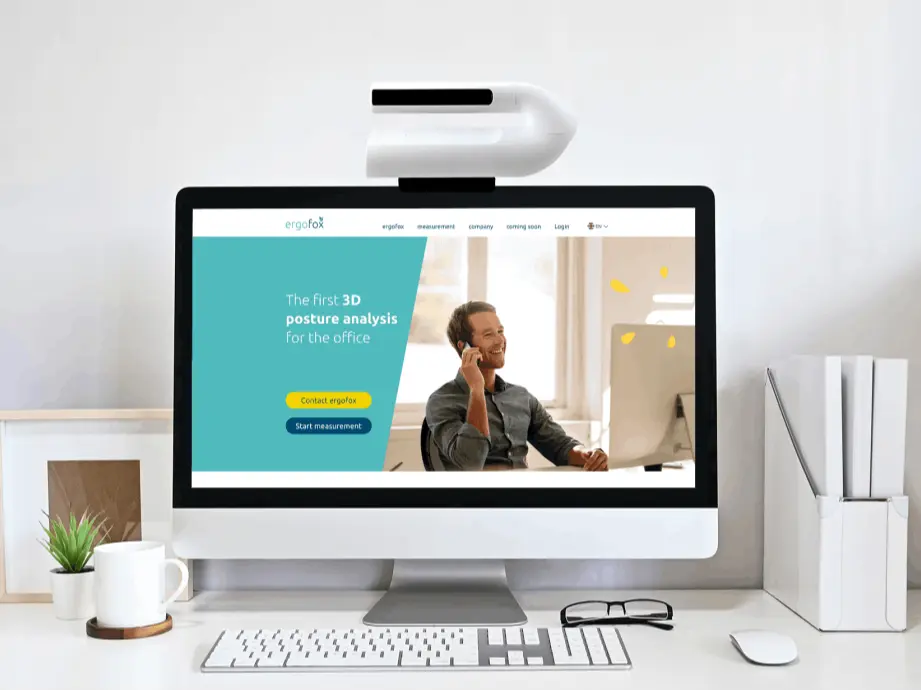

1. Measurement

Place the ergofox on your monitor and register quickly and conveniently using the device ID. The data is transmitted online, so the ergofox requires no complex installation. Over 2 days, a silent 3D sensor records your sitting posture at your workplace. You can keep working as usual.

2. Posture Report

After the measurement, you will receive your individual posture report. Your most common sitting postures are evaluated, analyzed, and visualized in an appealing way. The report also includes selected tips and exercises for ergonomic sitting.

3. Online Coaching

You will also have access to our online coaching. It helps you strengthen your back, learn more about healthy sitting, and achieve a lastingly healthier posture in everyday life. You can do the exercises directly at your workplace or access them on the go, so you stay flexible in terms of time and location.